LLM + PII Security Framework

PII, LLMs, and Third-Party AI SaaS in Financial Services: A Practical Security Spectrum for Enterprise Architecture

One of the most common AI use cases in financial services and insurance right now is using LLMs to provide services to customers. On paper, it sounds straightforward: a customer interacts through chat or voice, gets faster answers, receives better support, and ideally has a better experience than traditional channels. But in enterprise environments, the actual architecture gets layered very quickly.

In many real-world implementations, the front-end experience is delivered through a third-party SaaS platform, while the enterprise’s own systems remain on the backend. That means the customer interaction layer, orchestration, model interactions, and some runtime components may sit outside the enterprise boundary, while policy administration, claims, billing, customer platforms, and document systems remain inside. In regulated industries like financial services and insurance, that creates an immediate and valid concern: how is PII handled across every step of that chain?

This is not just a technical concern. It is a compliance concern, a trust concern, and increasingly a business risk concern. Organizations in these industries cannot afford vague answers when it comes to personally identifiable information. They need to be able to explain, with confidence and evidence, that customer data is safe and not being exposed inappropriately while LLMs are being used to deliver services. That means AI and agentic application architectures cannot be built on assumptions or vendor marketing language alone; they have to be grounded in accepted patterns, strong controls, and reviewable design decisions.

This article is my attempt to make sense of that problem in a way that is practical.

I spent time reviewing guidance from NIST AI-related resources, OWASP, CSA, and cloud security documentation from major providers to understand what the real risk areas are, which controls actually matter, and how they should be implemented in practice. The information was useful, but it came in different forms and from different angles—privacy, AI risk, application security, cloud controls, and operational governance. At some point, I realized I needed a mental model to keep it all straight. I started building one, and I kept refining it as I learned more.

What I’m sharing here is the result so far: a practical security spectrum and matrix that helps structure the conversation around LLMs, PII, and third-party AI SaaS in regulated enterprise environments.

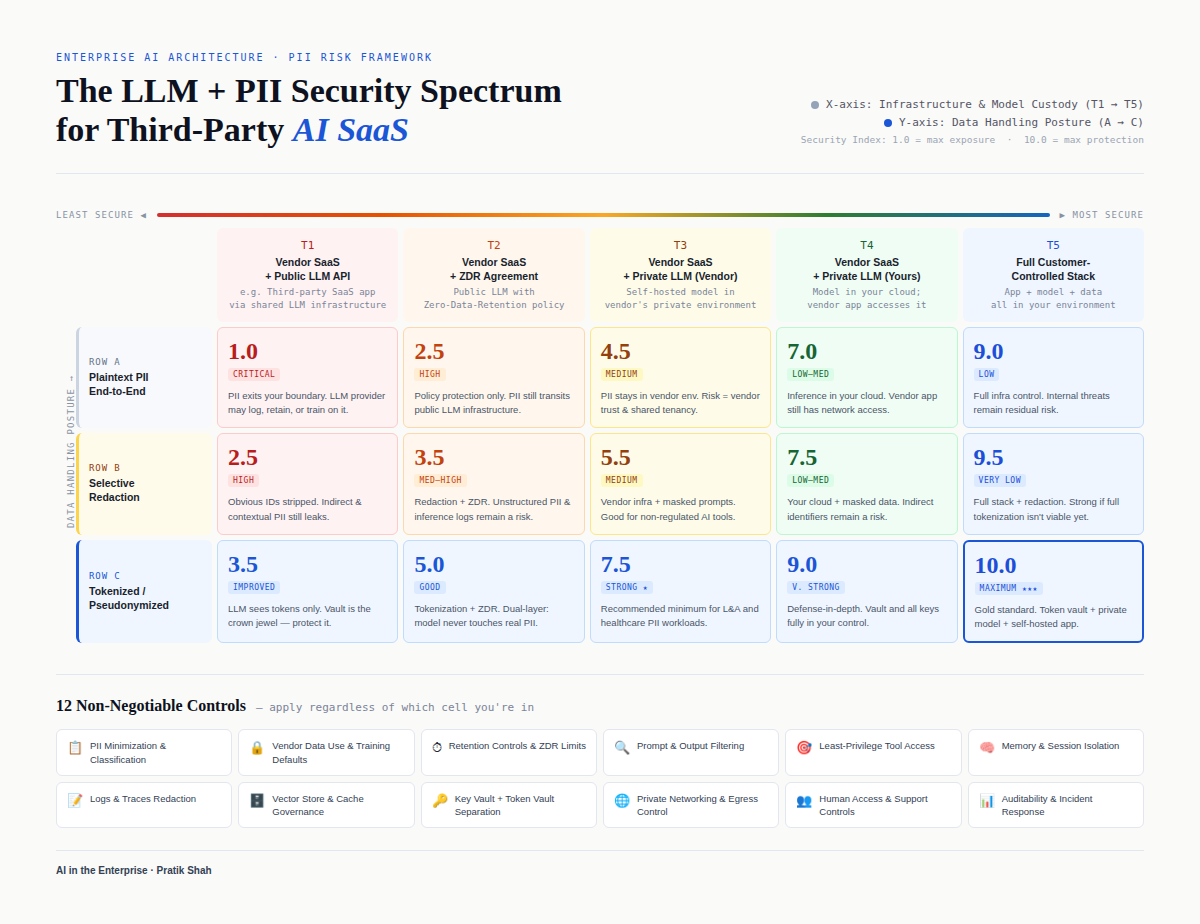

Here’s the LLM + PII Security Spectrum and Matrix

Framework by author, Visual: Claude

Why “public vs private LLM” is too simplistic

A lot of discussions still frame this as a simple comparison. If a solution uses a public model, it is treated as risky. If it uses a private model, it is treated as safe.

That framing is understandable, but it is too simplistic to be useful in real architecture or security reviews.

The actual risk is not determined only by which model is used or where it is hosted. It is also shaped by where the application runtime lives, how data moves through the system, what gets logged or retained, how memory and state are handled, which tools the agent can invoke, what support personnel can access, how long data persists, and where plaintext PII is visible in the end-to-end flow. In other words, the security posture of an LLM-enabled customer service system is a function of architecture, controls, and operations—not just model location.

That is why I found it more useful to think in a spectrum rather than a binary, and more useful to think in more than one dimension.

The first dimension: trust boundary and deployment custody

The first part of the model is a deployment and trust-boundary spectrum. This is closest to how most teams initially think about the problem, and it is still important. At one end, the enterprise is using a vendor SaaS platform that calls external shared LLM APIs, which means a wider trust boundary and less direct control over the runtime path. As you move along the spectrum, you may see dedicated or single-tenant model hosting, then vendor SaaS using a private or BYO model deployed in the customer environment, then the vendor application itself deployed in the customer environment with a private model, and eventually a mostly or fully customer-controlled stack where the application, model, and data plane sit inside the enterprise boundary.

This spectrum is useful because it clarifies who controls what. It helps answer practical questions about runtime ownership, networking, storage, observability, secrets, support access, and operational boundaries. In general, moving toward more enterprise control can reduce third-party custody risk. But it does not automatically make a system secure—it only narrows the trust boundary. The outcome still depends on design and execution.

The second dimension: how much PII is actually exposed in the pipeline

The second dimension is the one that changed how I think about this problem. Even if the deployment model looks strong on paper, the architecture can still be weak if raw PII is visible throughout the pipeline. A system can be “private” from a hosting standpoint and still be unsafe in practice if plaintext PII is flowing through prompts, traces, logs, session memory, caches, vector stores, analytics events, or tool payloads.

That is why the matrix needs a separate axis for PII exposure mode. At one end, plaintext PII exists throughout the pipeline. A stronger posture may involve selective masking or redaction, then early tokenization or pseudonymization, then late detokenization only in tightly controlled backend zones where enterprise systems actually need the real values. In more advanced architectures, this can be further strengthened with confidential computing or secure enclaves to protect data in use during sensitive processing.

This lens matters a lot in financial services and insurance because it opens up better options than simply “send raw PII through everything” or “move everything on-prem immediately.” It gives teams a way to reduce exposure materially while still delivering customer-facing capabilities at speed.

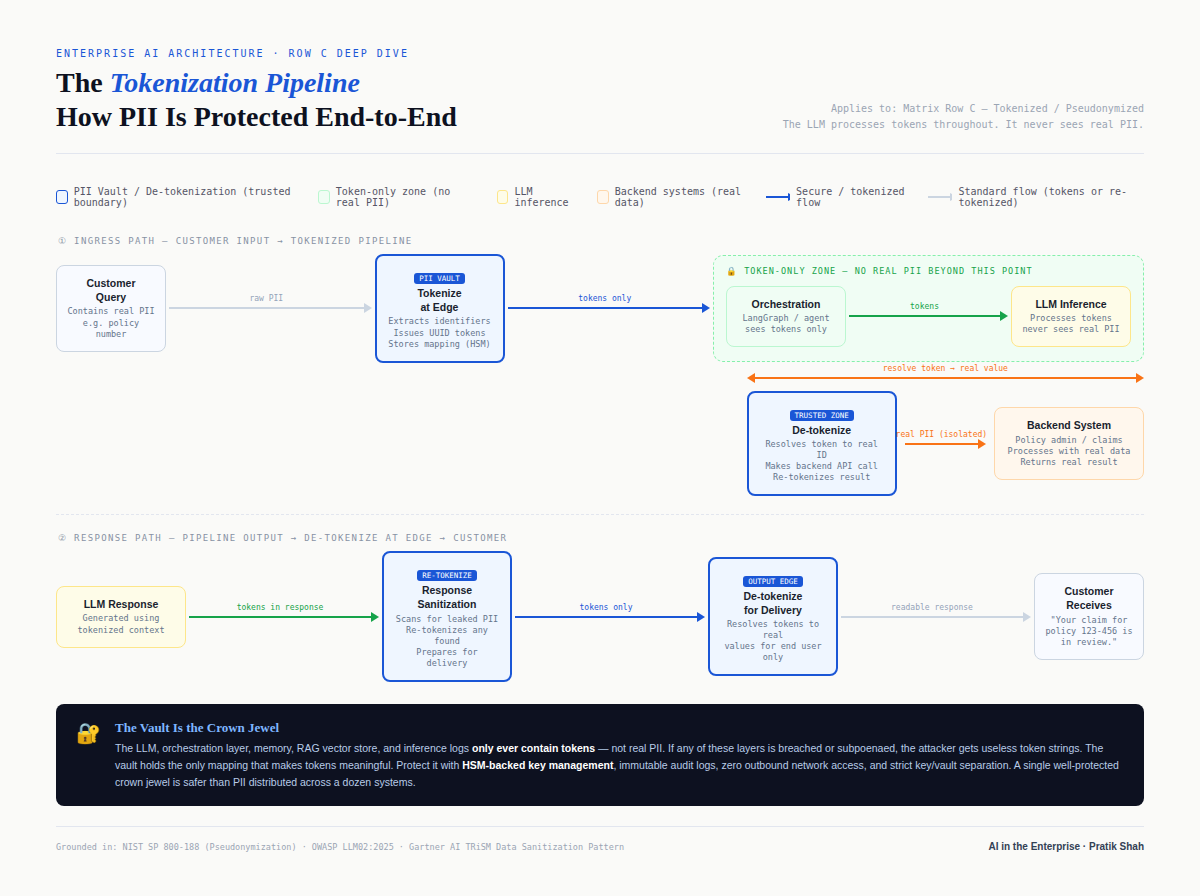

The pattern that stood out most: early tokenization and late detokenization

As I refined the model, one pattern kept standing out as especially practical for regulated environments: tokenize or pseudonymize sensitive data as early as possible, move tokens through the AI pipeline wherever feasible, and only detokenize inside secure backend zones when enterprise systems actually need the real values.

Framework by author, Visual: Claude

In practice, that means the customer’s input enters the system, sensitive fields or entities are identified, and those values are tokenized near the ingress point. The orchestration layer, agent runtime, and model interactions operate on tokens or protected representations instead of raw identifiers whenever possible. When a backend policy or claims system needs the real value to execute a business function, a tightly controlled service inside a secure zone performs detokenization, executes the backend interaction, and then the response is protected again before passing back through intermediate layers. At the edge, only the final presentation layer detokenizes what must be shown to the user.
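A minimal sketch of that flow, under loud assumptions: `TokenVault` is a hypothetical in-memory stand-in for a hardened vault service, the only entity detected is a US SSN matched by a simple regex, and the function names `protect_at_ingress` and `reveal_in_secure_zone` are invented for illustration. It only shows where plaintext exists and where it does not.

```python
import re
import secrets


class TokenVault:
    """Hypothetical in-memory vault; a real one is a separate, hardened service."""

    def __init__(self):
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value: str) -> str:
        # Reuse the same token for a repeated value so downstream joins still work.
        if value not in self._value_to_token:
            token = f"tok_{secrets.token_hex(8)}"
            self._value_to_token[value] = token
            self._token_to_value[token] = value
        return self._value_to_token[value]

    def detokenize(self, token: str) -> str:
        return self._token_to_value[token]


# Assumed detector: US SSN only. Real detection needs far broader coverage.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
TOKEN_PATTERN = re.compile(r"tok_[0-9a-f]{16}")


def protect_at_ingress(text: str, vault: TokenVault) -> str:
    """Replace detected values with tokens before anything else sees the text."""
    return SSN_PATTERN.sub(lambda m: vault.tokenize(m.group()), text)


def reveal_in_secure_zone(text: str, vault: TokenVault) -> str:
    """Swap tokens back to real values; meant to run only inside the secure zone."""
    return TOKEN_PATTERN.sub(lambda m: vault.detokenize(m.group()), text)


vault = TokenVault()
raw = "My SSN is 123-45-6789, please update my policy."
protected = protect_at_ingress(raw, vault)       # what orchestration and the model see
restored = reveal_in_secure_zone(protected, vault)  # what the backend call sees
```

Everything between the two calls (orchestration, agent runtime, model prompts, traces) would handle only `protected`, which is the property the pattern is trying to guarantee.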

What I like about this pattern is that it changes the blast radius. It does not eliminate risk, but it can materially improve the architecture’s ability to limit where plaintext PII exists. It also gives teams a much clearer story for architecture governance, security review, and compliance discussions because they can explicitly show where sensitive data appears, where it is transformed, and where access is tightly controlled.

At the same time, it is not a magic solution. Tokenization introduces new crown jewels: the token vault and key management become critical infrastructure, and if those are weak, the pattern weakens quickly. Unstructured data detection also becomes a major factor because missed entities in free text, transcripts, or attachments can still leak real PII into logs or prompts. And pseudonymization is reversible by design, so it should not be treated as the same thing as anonymization. The point is risk reduction and architectural control, not a shortcut around governance.

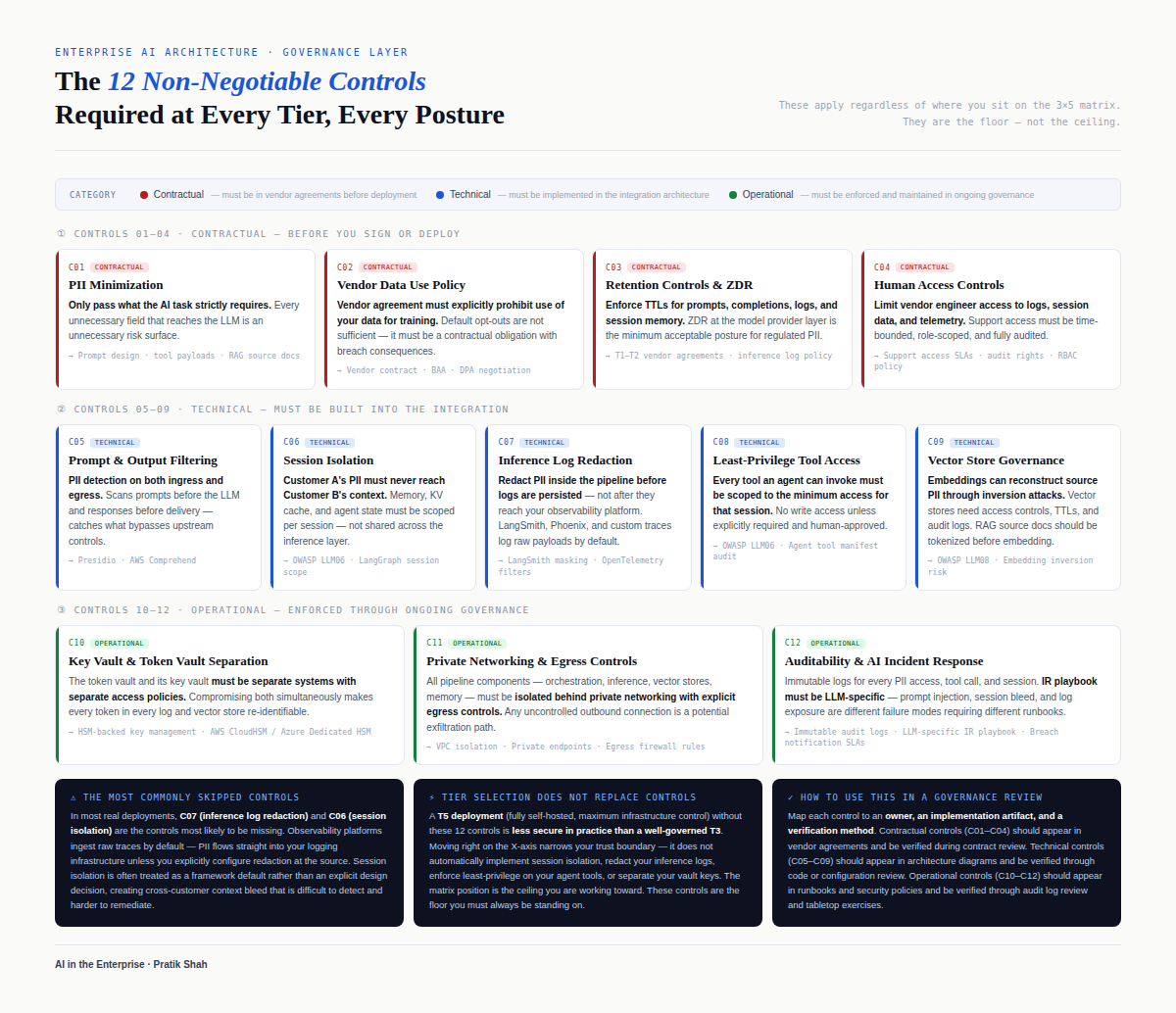

What usually gets missed: the non-negotiable operational controls

One of the biggest lessons from this research and modeling exercise is that teams often spend a lot of time debating public versus private models while underestimating the controls that actually fail in production. In many systems, the most realistic leakage paths are not the model endpoint itself. They are logging pipelines, traces, debug tooling, session memory, vector stores, replay tools, analytics events, and overly broad tool access inside agent workflows.

That is why the spectrum/matrix also includes a cross-cutting layer of non-negotiable controls. These are not hardening items to “add later” once the pilot works; they are the controls that determine whether a promising architecture stays safe under real traffic and real operational pressure.

Framework by author, Visual: Claude

PII classification and minimization starts with agreeing on what is actually sensitive in your business context, including direct identifiers, quasi-identifiers, and domain-specific fields. Once that is defined, the architecture should be designed to collect, process, and retain only the minimum data needed for the use case, instead of pushing full records through the pipeline by default.
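One way to make minimization concrete is a per-use-case field allowlist applied before a record enters the AI pipeline. The sketch below is illustrative only: the use-case names, field names, and the `minimize` helper are assumptions, not part of any product or standard.

```python
# Hypothetical allowlists: which fields each use case is permitted to see.
ALLOWED_FIELDS = {
    "claim_status": {"claim_id", "claim_status", "last_updated"},
    "billing_inquiry": {"policy_id", "amount_due", "due_date"},
}


def minimize(record: dict, use_case: str) -> dict:
    """Keep only the fields the use case needs; unknown use cases get nothing."""
    allowed = ALLOWED_FIELDS.get(use_case, set())
    return {key: value for key, value in record.items() if key in allowed}


full_record = {
    "claim_id": "C-1001",
    "claim_status": "in_review",
    "last_updated": "2024-05-01",
    "ssn": "123-45-6789",
    "date_of_birth": "1980-01-01",
}
minimized = minimize(full_record, "claim_status")
```

Defaulting to an empty result for an unregistered use case keeps the failure mode closed rather than open.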

Retention and training posture means understanding exactly what each component in the chain retains and for how long, from SaaS layers to model providers to logs and caches. It also means explicitly confirming the default data-usage and model-training settings, so teams are not relying on assumptions that may not match the product tier, configuration, or contract.

Prompt and output filtering is necessary because both the inbound and outbound paths can become leakage points. Inputs should be normalized and screened to reduce prompt injection and unnecessary sensitive content, while outputs should be validated so the system does not reveal sensitive data, invented policy details, or unsafe responses.
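For the outbound half, a last-line output screen might look like the sketch below. The two regex patterns and the `screen_output` name are assumptions for illustration; pattern matching alone will miss a lot, so this would complement, not replace, entity detection and policy validation.

```python
import re

# Assumed patterns; production systems need broader, tested detectors.
OUTPUT_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def screen_output(text: str):
    """Mask anything that looks like PII and report which detectors fired."""
    findings = []
    for label, pattern in OUTPUT_PATTERNS.items():
        if pattern.search(text):
            findings.append(label)
            text = pattern.sub(f"[{label.upper()} REMOVED]", text)
    return text, findings


draft = "Your SSN 123-45-6789 is on file; we emailed jane@example.com."
safe_text, findings = screen_output(draft)
```

Returning the findings alongside the masked text lets the caller decide whether to send the masked response, block it, or raise an alert.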

Memory and session isolation matters because conversation context is useful but dangerous if not bounded correctly. Session state, summaries, and memory artifacts must be isolated per user/session/tenant, and teams should be explicit about what content is even eligible to be stored in memory in regulated workflows.
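A small sketch of what bounded memory can mean in code: every read and write is keyed by tenant, user, and session together, and only explicitly eligible content kinds are stored. The `SessionMemory` class and the `ELIGIBLE_KINDS` values are hypothetical.

```python
from collections import defaultdict

# Assumed policy: only these content kinds may be persisted in memory.
ELIGIBLE_KINDS = {"user_message", "assistant_message"}


class SessionMemory:
    """In-memory illustration; a real store would also enforce this server-side."""

    def __init__(self):
        self._store = defaultdict(list)

    def append(self, tenant_id, user_id, session_id, kind, content) -> bool:
        if kind not in ELIGIBLE_KINDS:
            return False  # e.g. raw tool payloads are never remembered
        self._store[(tenant_id, user_id, session_id)].append(content)
        return True

    def history(self, tenant_id, user_id, session_id) -> list:
        # The full composite key is required, so there is no cross-session read path.
        return list(self._store.get((tenant_id, user_id, session_id), []))


memory = SessionMemory()
memory.append("tenant-a", "user-1", "s-1", "user_message", "Where is my claim?")
skipped = memory.append("tenant-a", "user-1", "s-1", "tool_payload", "{...}")
```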

Log and trace redaction is one of the most important controls in practice because observability systems often become accidental data lakes for sensitive content. Redaction and masking need to happen before data is written to logs or traces, not afterward, and this includes prompts, tool payloads, stack traces, and error bodies.
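In Python, one place to enforce "redact before write" is a `logging.Filter` attached to the handler, so the record is rewritten before it is formatted and emitted. This is a sketch assuming a single SSN pattern and a made-up logger name; the same idea would need to cover trace exporters and error reporters too.

```python
import io
import logging
import re

# Assumed pattern; extend with whatever detectors the organization trusts.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


class RedactingFilter(logging.Filter):
    """Rewrite the fully formatted message before the handler emits it."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.msg = SSN_PATTERN.sub("[REDACTED]", record.getMessage())
        record.args = None  # arguments are already merged into msg
        return True


stream = io.StringIO()  # stands in for a real log sink
handler = logging.StreamHandler(stream)
handler.addFilter(RedactingFilter())

logger = logging.getLogger("llm_pipeline_demo")  # hypothetical logger name
logger.addHandler(handler)
logger.setLevel(logging.INFO)
logger.propagate = False

logger.info("prompt=%s", "My SSN is 123-45-6789")
logged = stream.getvalue()
```

Attaching the filter to the handler rather than to one logger means records arriving from child loggers pass through it as well.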

Vector store and cache governance is essential if embeddings, retrieval indexes, or response caches are part of the design. Teams need rules for what can enter those stores, what must be tokenized or excluded, how long data stays there, how deletion works, and how tenant isolation is enforced.
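Those rules (admission, retention, deletion, tenant isolation) can be expressed as a thin wrapper around whatever cache or index is in use. The sketch below is a hypothetical `GovernedCache` with an injectable clock; the `contains_raw_pii` flag is assumed to come from an upstream classifier.

```python
import time


class GovernedCache:
    """Illustrative cache with an admission rule, TTL, and per-tenant deletion."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}

    def put(self, tenant_id, key, value, contains_raw_pii: bool) -> bool:
        if contains_raw_pii:
            return False  # admission rule: raw PII never enters the store
        self._entries[(tenant_id, key)] = (value, self._clock() + self._ttl)
        return True

    def get(self, tenant_id, key):
        entry = self._entries.get((tenant_id, key))
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._entries[(tenant_id, key)]  # enforce retention on read
            return None
        return value

    def delete_tenant(self, tenant_id) -> int:
        doomed = [k for k in self._entries if k[0] == tenant_id]
        for k in doomed:
            del self._entries[k]
        return len(doomed)


now = [0.0]  # fake clock so expiry can be demonstrated deterministically
cache = GovernedCache(ttl_seconds=60, clock=lambda: now[0])
stored = cache.put("tenant-a", "faq:deductible", "Deductible info for tok_ab12", contains_raw_pii=False)
rejected = cache.put("tenant-a", "raw", "SSN 123-45-6789", contains_raw_pii=True)
```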

Least-privilege tool access is critical in agentic systems because tools are often the path to real actions and real data, not just read-only lookups. Each tool should have narrow permissions, strong authorization checks, validated inputs and outputs, and auditable execution identities rather than broad “agent can do everything” access.
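As a sketch of narrow tool permissions, the registry below requires one explicit scope per tool, checks it on every invocation, and records who attempted what. The `Tool` and `ToolRegistry` classes, scope strings, and tool names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Tool:
    name: str
    required_scope: str
    handler: Callable[[dict], dict]


class ToolRegistry:
    """Every invocation is authorized against the caller's granted scopes."""

    def __init__(self):
        self._tools = {}
        self.audit = []  # (caller, tool name, decision)

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def invoke(self, name: str, args: dict, granted_scopes: set, caller: str) -> dict:
        tool = self._tools.get(name)
        allowed = tool is not None and tool.required_scope in granted_scopes
        self.audit.append((caller, name, "allowed" if allowed else "denied"))
        if not allowed:
            raise PermissionError(f"{caller} may not invoke {name}")
        return tool.handler(args)


registry = ToolRegistry()
registry.register(Tool("get_claim_status", "claims:read",
                       lambda args: {"claim_id": args["claim_id"], "status": "open"}))
registry.register(Tool("update_address", "customer:write",
                       lambda args: {"updated": True}))

agent_scopes = {"claims:read"}  # this agent is read-only by design
result = registry.invoke("get_claim_status", {"claim_id": "C-1"}, agent_scopes, "chat-agent")
```

With these scopes, a call to `update_address` from the same agent raises `PermissionError` and leaves a "denied" entry in the audit list.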

Key vault and token vault separation becomes especially important when tokenization is part of the architecture. The token vault and key management system are high-value targets, and separating these control planes from ordinary application storage and secrets reduces blast radius and supports stricter monitoring and access controls.

Private networking and egress control matters because even a good model/runtime choice can be undermined by uncontrolled traffic paths and outbound connectivity. Teams should define approved endpoints, restrict egress where possible, and use private connectivity patterns so sensitive data is not moving through unnecessary or weakly governed routes.
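Egress restrictions are normally enforced at the network layer, but an application-level allowlist is a cheap second check. The sketch below assumes made-up hostnames and a hypothetical `is_egress_allowed` helper.

```python
from urllib.parse import urlsplit

# Hypothetical approved endpoints for this pipeline.
APPROVED_HOSTS = {
    "llm.internal.example.com",
    "claims-api.internal.example.com",
}


def is_egress_allowed(url: str) -> bool:
    """Allow only HTTPS calls to an exact, approved hostname."""
    parts = urlsplit(url)
    return parts.scheme == "https" and parts.hostname in APPROVED_HOSTS


ok = is_egress_allowed("https://llm.internal.example.com/v1/chat")
```

Matching the exact hostname, rather than a prefix or substring, avoids being fooled by look-alike domains such as `llm.internal.example.com.evil.test`.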

Human access and support controls are often overlooked because teams focus on runtime behavior and forget operational access paths. In reality, a lot of exposure happens through support, debugging, and admin consoles, so organizations need strong controls around who can view interactions, logs, and traces, with approvals, just-in-time access, and auditing for privileged users.

Auditability and traceability are non-negotiable in regulated environments because saying controls exist is not the same as proving they were followed. Teams need evidence showing what happened, when, and under which identity when data was accessed or actions were taken, especially in agentic flows where multiple components may act in sequence.
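One common way to make such evidence tamper-evident is a hash-chained log, where each entry commits to the one before it. This is a sketch, not a claim about any particular product: the `AuditLog` class and its field names are assumptions, and a real deployment would also protect the storage itself.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log where each entry includes the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def record(self, actor: str, action: str, resource: str, timestamp: str) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"actor": actor, "action": action, "resource": resource,
                "timestamp": timestamp, "prev_hash": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


audit_log = AuditLog()
audit_log.record("svc-detokenizer", "detokenize", "tok_ab12", "2024-05-01T10:00:00Z")
audit_log.record("chat-agent", "invoke:get_claim_status", "C-1001", "2024-05-01T10:00:01Z")
intact = audit_log.verify()
```

Editing or removing any earlier entry changes its hash and breaks the chain, so `verify()` returns `False` afterwards.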

Incident response readiness for AI/agentic pipelines is the final piece that often gets pushed too late. Teams need a playbook that explicitly covers AI-specific failure modes—such as prompt injection causing tool misuse, sensitive data overexposure in logs, or unintended persistence in memory/retrieval layers—so they can detect, contain, and communicate quickly when something goes wrong.

This is the part of the conversation where architecture becomes operational reality. A clean diagram is not enough. Teams need to define exactly what data is allowed in logs, what memory can persist, what tools agents are allowed to invoke, what gets retained and for how long, and who can access what during support or incident handling. If those controls are not explicitly designed and enforced, the deployment model alone will not save the system.

Why I created the matrix and what I hope it helps with

I did not build this spectrum and matrix to create a perfect scorecard or a vendor ranking. I built it to create a practical decision framework that helps people have better conversations.

In real enterprise settings, architecture decisions involve security teams, compliance teams, product leaders, platform engineers, enterprise architects, and business sponsors. Each group focuses on a different part of the problem. The matrix helps bring those pieces into one view.

It gives security teams a way to discuss exposure and controls without collapsing the topic into simple labels. It gives architecture teams a way to compare deployment options and data-flow patterns more honestly. It gives business leaders a way to ask better questions and evaluate trade-offs. And it gives implementation teams a clearer signal that “private model” is not the finish line if the rest of the pipeline is still leaking sensitive data through operational channels.

I also built it because I needed one myself. There is a lot of information in this space, and it is easy to lose track of what belongs where. Building a mental model helped me connect AI risk guidance, privacy controls, application security practices, and enterprise architecture patterns into something I can actually use in design and review conversations.

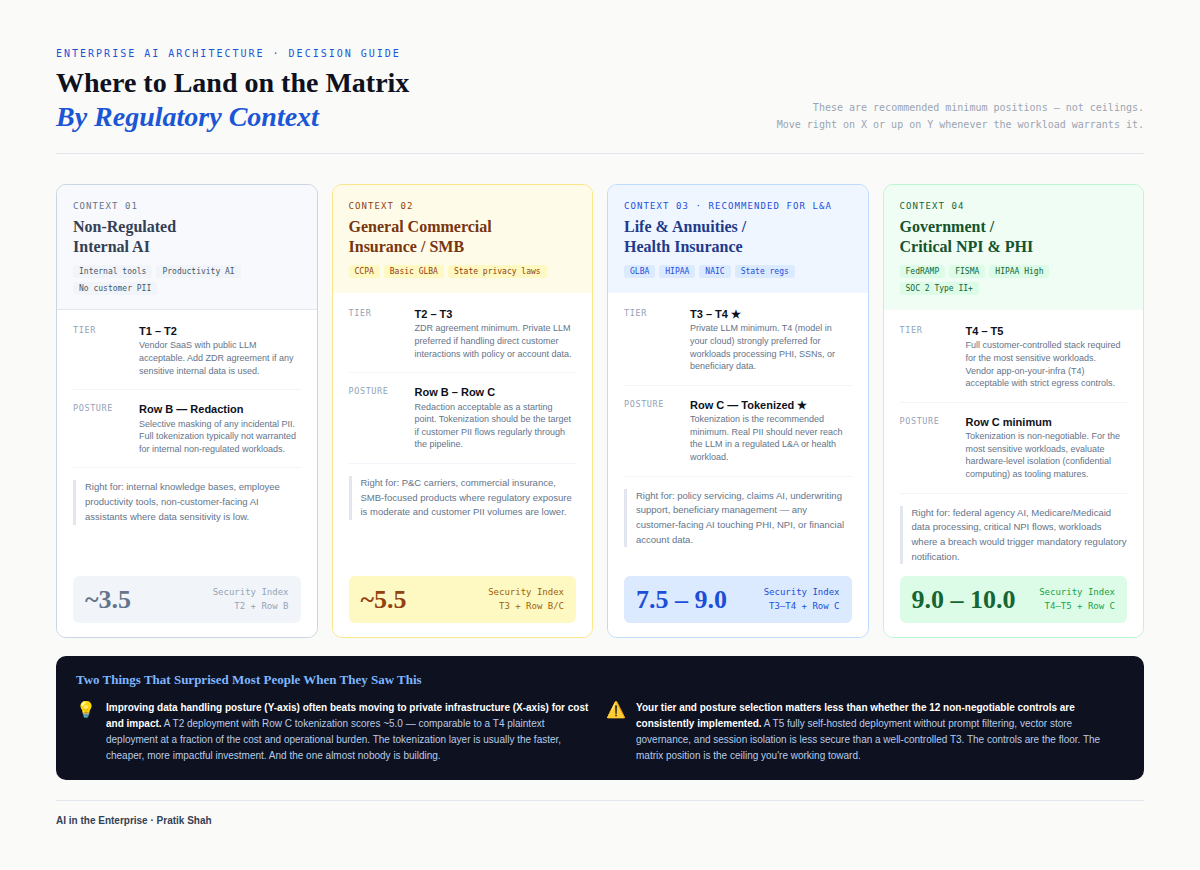

How to use this in practice

The best use of this matrix is not as a final answer, but as the starting structure for a deeper review. When evaluating an LLM or agentic customer service architecture in a regulated environment, I now find it useful to map the proposed solution across both dimensions at the same time: where it sits on the custody and trust-boundary spectrum, and what the data exposure mode looks like through the actual pipeline. Once that is clear, the next step is to examine the non-negotiable controls and look for gaps.

Here’s the decision guide:

Framework by author, Visual: Claude

This approach moves the conversation away from broad claims and toward evidence. Instead of asking only whether a vendor uses a public or private model, teams can ask where plaintext PII exists, who can see it, what gets logged, how memory is handled, how tool access is constrained, what retention defaults are in place, and what proof exists that these controls are actually working. Those are the questions that hold up better under security and compliance scrutiny.
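The review structure described in this section (place the solution on both dimensions, then look for control gaps) can be captured in a small data structure. In the sketch below the level names paraphrase the article's text and may not match the labels in the matrix image; the numeric values only indicate position on each spectrum and are not a score. `Assessment` and `controls_with_evidence` are hypothetical names.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Custody(IntEnum):
    """Position on the trust-boundary spectrum (ordering only, not a score)."""
    VENDOR_SAAS_SHARED_LLM_API = 1
    DEDICATED_MODEL_HOSTING = 2
    VENDOR_SAAS_PRIVATE_OR_BYO_MODEL = 3
    VENDOR_APP_IN_CUSTOMER_ENV = 4
    CUSTOMER_CONTROLLED_STACK = 5


class Exposure(IntEnum):
    """PII exposure mode in the pipeline (ordering only, not a score)."""
    PLAINTEXT_THROUGHOUT = 1
    SELECTIVE_MASKING = 2
    EARLY_TOKENIZATION = 3
    LATE_DETOKENIZATION = 4
    CONFIDENTIAL_COMPUTING = 5


# The twelve cross-cutting controls discussed in the article.
REQUIRED_CONTROLS = (
    "pii_classification_and_minimization",
    "retention_and_training_posture",
    "prompt_and_output_filtering",
    "memory_and_session_isolation",
    "log_and_trace_redaction",
    "vector_store_and_cache_governance",
    "least_privilege_tool_access",
    "key_vault_and_token_vault_separation",
    "private_networking_and_egress_control",
    "human_access_and_support_controls",
    "auditability_and_traceability",
    "incident_response_readiness",
)


@dataclass
class Assessment:
    name: str
    custody: Custody
    exposure: Exposure
    controls_with_evidence: set = field(default_factory=set)

    def gaps(self) -> list:
        """Controls for which no evidence has been provided yet."""
        return [c for c in REQUIRED_CONTROLS if c not in self.controls_with_evidence]


proposal = Assessment(
    name="vendor chat pilot",  # made-up example
    custody=Custody.VENDOR_SAAS_SHARED_LLM_API,
    exposure=Exposure.EARLY_TOKENIZATION,
    controls_with_evidence=set(REQUIRED_CONTROLS)
    - {"log_and_trace_redaction", "incident_response_readiness"},
)
open_gaps = proposal.gaps()
```

Tracking controls by evidence rather than by claim keeps the review pointed at proof, which is the shift this approach is meant to encourage.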

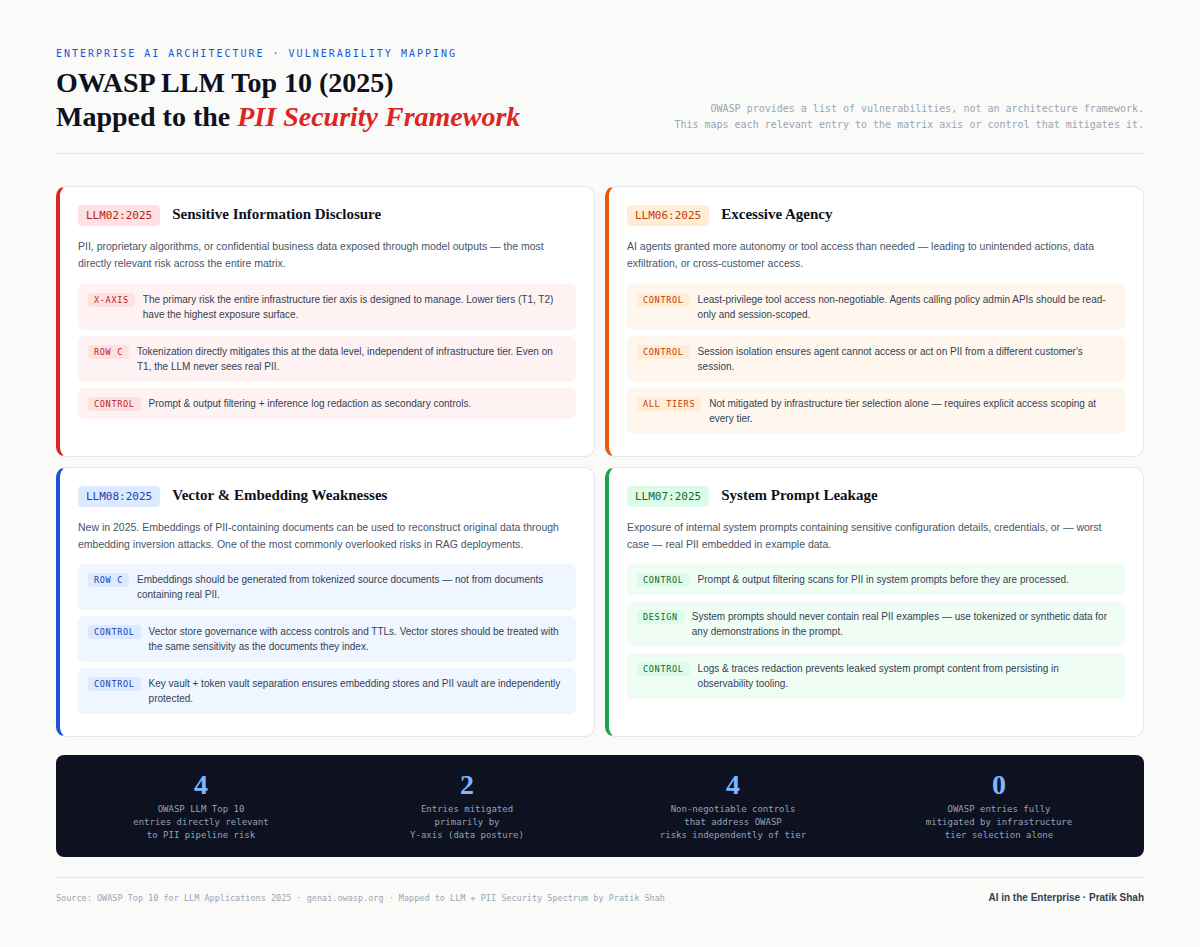

OWASP LLM Top 10 Mapping to the PII security framework

Here is how this security framework maps to OWASP LLM Top 10.

Framework by author, Visual: Claude

Final thoughts

I’m sharing this because I think many of us in financial services and insurance are dealing with the same challenge: how to deliver meaningful AI-enabled customer experiences while protecting sensitive data in a way that is credible, defensible, and practical. The technology is moving fast, but the standards for trust in regulated industries have not changed. If anything, they matter more now.

This spectrum and matrix is my attempt to make that problem easier to reason about. It is not meant to replace formal threat modeling, legal review, or compliance processes. It is meant to help teams think more clearly, design more intentionally, and avoid the common trap of oversimplifying a very real and complex security problem.

I’m still refining the model, and I expect it will evolve as patterns mature and more implementation evidence becomes available. But this version has already helped me organize the space much better, and I hope it is useful to others working through similar architectures.

If you are working on LLM or agentic solutions in insurance or financial services, especially where third-party SaaS and enterprise backend systems are both involved, I’d be interested in how your team is approaching the trade-offs. The most useful conversations I’ve had so far have come from comparing actual architecture decisions, not just theoretical positions.

References / Further Reading

NIST AI RMF (AI Risk Management Framework): https://www.nist.gov/itl/ai-risk-management-framework

NIST AI 600-1 (Generative AI Profile): https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf

NIST SP 800-122 (Guide to Protecting the Confidentiality of PII): https://nvlpubs.nist.gov/nistpubs/legacy/sp/nistspecialpublication800-122.pdf

OWASP Top 10 for LLM Applications: https://owasp.org/www-project-top-10-for-large-language-model-applications/

CSA AI Controls Matrix (AICM): https://cloudsecurityalliance.org/artifacts/ai-controls-matrix

Google Cloud Sensitive Data Protection – Pseudonymization: https://cloud.google.com/sensitive-data-protection/docs/pseudonymization

Microsoft Azure Confidential AI / Confidential Computing: https://learn.microsoft.com/en-us/azure/confidential-computing/confidential-ai

OpenAI API – Your Data: https://platform.openai.com/docs/guides/your-data